In one sense, interactive experiences are about self-expression: many designers strive to express themselves through the narrative chosen to govern a game's fiction, while many players play games to express themselves in new and exciting environments. However, given the drastic limitations of input interfaces and AI technology, both designer and player are deprived of one of their most powerful means of expression: words.

By necessity, players have no voice; pre-recorded avatar blurbs do not count. Deprived of full control of their virtual "bodies" and muted, players can neither assume a posture nor convey intention. Unable to negotiate, threaten, bluff or lie, they can act only through the very small set of verbs we offer them: USE, SHOOT, CROUCH, RUN. Whenever game designers debate the limits of their medium with respect to emotional engagement, it seems somebody will bring up Infocom's text adventure Planetfall, and with good reason.

In the real world, we wield the pen. In games, we do not even have a spray can to protest our confinement with slogans and graffiti. In games, we rarely read, and never write. Imagine a set of interactive surfaces: message billboards scattered throughout a multiplayer level that players can write on with a QWERTY keyboard. Imagine messages and taunts displayed not in a HUD resembling the Windows desktop, but within the game world. Restricting team communication to fixed input and output locations might not always improve gameplay, but it adds to our toolset. Being able to leave messages for other players by placing them in space is a powerful device for any game with cooperative elements. Being able to leave markers and reminders for herself empowers the player to find her way back through the game spaces we create. Being able to maintain a log within the game allows a player to personalize her experience and elaborate her own narrative. Any game with access to a keyboard can offer this option.
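To make the billboard idea concrete, here is a minimal Python sketch of a store for messages anchored at positions in a level. The names `SurfaceBoard`, `post` and `near` are hypothetical, and it assumes world positions are plain 3D coordinate tuples and that "nearby" simply means within a straight-line radius; a real engine would use its own spatial index and visibility rules.

```python
import math
from dataclasses import dataclass, field

Vec3 = tuple[float, float, float]


@dataclass
class Message:
    author: str
    text: str
    position: Vec3  # world-space point where the message was left


@dataclass
class SurfaceBoard:
    """Hypothetical store of player-written messages anchored in level space."""
    messages: list[Message] = field(default_factory=list)

    def post(self, author: str, text: str, position: Vec3) -> None:
        """Leave a message (a team note or a personal marker) at a position."""
        self.messages.append(Message(author, text, position))

    def near(self, position: Vec3, radius: float) -> list[Message]:
        """Return messages within `radius` of `position`, nearest first."""
        hits = []
        for m in self.messages:
            d = math.dist(m.position, position)
            if d <= radius:
                hits.append((d, m))
        hits.sort(key=lambda pair: pair[0])  # sort by distance only
        return [m for _, m in hits]


if __name__ == "__main__":
    board = SurfaceBoard()
    board.post("alice", "Sniper on the roof", (10.0, 0.0, 5.0))
    board.post("bob", "Note to self: the key is in the crypt", (200.0, 0.0, 0.0))
    # Only the nearby warning is within 20 units of this player.
    print([m.text for m in board.near((12.0, 0.0, 5.0), radius=20.0)])
```

The same structure serves both cooperative notes and a player's private reminders; what differs is only who is allowed to read them.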

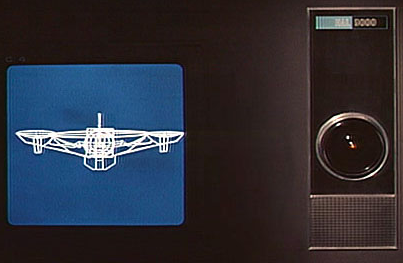

The early adventure games stood or fell with the quality of their parsers. Text-based interaction has its problems, but when it comes to interaction with NPCs, I believe text to be superior to any attempt to fake audiovisual humanity. The setup of the Turing Test was chosen for a reason. No in-game AI offers the addictive elegance of Joseph Weizenbaum's ELIZA. Human NPCs talk; they do not listen. The only action available to the player is reaction: a button press that advances the script. Games like Thief feature sophisticated NPC AI, with guards who converse among themselves, but the player herself never converses except by manipulating objects. HAL 9000 in 2001: A Space Odyssey, or the ancient enemy in Dean Koontz's Phantoms, is more convincing than any game NPC. Imagine System Shock's SHODAN implemented using Kenneth Colby's paranoid PARRY. Such an entity might well turn out more interactive, more engaging, and more convincing than Alyx Vance or Aki Ross.

Interactive Surfaces also allow us to open up the conversation. Whether through simulated bullets, button presses or typed text, player and NPC AI always communicate through the environment. It is possible to combine the semantic range and clarity of typed text with the broadcast range of a gunshot: imagine the player having to communicate with one or more NPCs through typed messages that, inside the computer or on the billboards, can be intercepted by enemy AI. Feint and bait become possible. If we seriously believe we can eventually write game AI that offers challenging and meaningful conversation, surely we will do so better without the added complexity of speech recognition. Even if our ability to parse text is limited, we can still restrict the player's vocabulary while moving the selection onto an Interactive Surface that exists inside the game.
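One way to model messages that travel through the environment is to treat a typed message like a sound event: every agent within range receives it, whichever side it is on. The Python sketch below assumes 2D positions, a single audible range and a deliberately naive, hypothetical enemy heuristic (`enemy_guess_attack_side`) that keys on a tiny vocabulary; it is meant only to show why feints become possible once enemies can overhear typed text.

```python
import math
from dataclasses import dataclass, field
from typing import Optional

SIDES = ("left", "right")  # hypothetical tiny vocabulary the enemy AI understands


@dataclass
class Listener:
    name: str
    team: str
    position: tuple[float, float]
    heard: list[str] = field(default_factory=list)


def broadcast(text: str, origin: tuple[float, float],
              listeners: list[Listener], audible_range: float) -> list[str]:
    """Deliver `text` to every listener within range of `origin`, friend or foe.

    Returns the names of the listeners that received it.
    """
    receivers = []
    for listener in listeners:
        if math.dist(listener.position, origin) <= audible_range:
            listener.heard.append(text)
            receivers.append(listener.name)
    return receivers


def enemy_guess_attack_side(heard: list[str]) -> Optional[str]:
    """Naive enemy heuristic: believe the most recent message naming a side."""
    for text in reversed(heard):
        for word in text.lower().split():
            word = word.strip(".,!?")  # ignore trailing punctuation
            if word in SIDES:
                return word
    return None


if __name__ == "__main__":
    ally = Listener("ally", "player", (1.0, 0.0))
    guard = Listener("guard", "enemy", (3.0, 4.0))
    # The player types a feint while actually planning to flank right.
    broadcast("We attack left!", (0.0, 0.0), [ally, guard], audible_range=10.0)
    print(enemy_guess_attack_side(guard.heard))
```

Because the message is an event in the world rather than a private channel, the player's choice of where and what to write becomes part of the tactics.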

If we continue to walk the Uncanny Valley in our quest to turn more and more of the game experience into a Turing Test, we are simply attempting to put Interactive Surfaces on human-shaped meshes. Convincing game AI stands or falls with the player's projection of her own intelligence onto whatever hollow shells we place in her view. This is what I call the Turing Trap: the more convincing our NPCs look, move, pose and talk, the less we will be able to let the player actually interact with them. Investing the same resources in an AI that demands much less "willing suspension of initiative" seems preferable. Human NPCs are an attempt to create a GUI with a face. In many ways, the cyclops eye of HAL 9000 is a much better canvas for the player's imagination than the eye of the G-Man.

Text is the most compact representation of content we have found. If a significant amount of the content in our game is simply text, this offers an opportunity to add remote (e.g. episodic or broadcast) content to games. Hypertext was the foundation of the World Wide Web. Imagine rendering XML text from a remote web server onto a local Interactive Surface, allowing us to retain control over compact (i.e. text or composite) content after shipping the game.
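As a minimal sketch of remote text content, the Python code below parses an XML feed into per-surface lines using the standard library's `xml.etree.ElementTree` and `urllib.request`. The feed schema (`<surface id="...">` elements containing `<line>` elements) and any URL are hypothetical assumptions; falling back to text shipped with the game when the feed is unreachable is one design choice among several.

```python
import urllib.request
import xml.etree.ElementTree as ET


def parse_surface_content(xml_text: str) -> dict[str, list[str]]:
    """Map each <surface id="..."> element to the text of its <line> children."""
    root = ET.fromstring(xml_text)
    content: dict[str, list[str]] = {}
    for surface in root.iter("surface"):
        lines = [(line.text or "").strip() for line in surface.findall("line")]
        content[surface.get("id", "")] = lines
    return content


def fetch_surface_content(url: str, fallback: dict[str, list[str]],
                          timeout: float = 5.0) -> dict[str, list[str]]:
    """Download and parse a remote feed, or return shipped text on any failure."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return parse_surface_content(response.read().decode("utf-8"))
    except (OSError, ET.ParseError, UnicodeDecodeError):
        # Network errors (urllib.error.URLError is an OSError), malformed XML
        # or bad encoding: keep showing the content that shipped with the game.
        return fallback


if __name__ == "__main__":
    feed = """<feed>
      <surface id="lobby"><line>Episode 2 unlocks on Friday</line></surface>
    </feed>"""
    print(parse_surface_content(feed))
```

Only the compact text travels over the network; fonts, layout and the surface meshes stay local, which is what makes this kind of post-ship update cheap.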

"Half-Life 2" and the upcoming "Prey" both experiment with electronic pre-release delivery of game content. In the former case, unencrypted assets gave away some of the scripted content before the executable was even available to players. The ability to unlock content on a global schedule allows for gaming events in which disclosure is synchronized for everybody, a concern for major titles centered around a one-off play experience. In my view, remote content rendered straight to in-game surfaces is one of the key technologies for episodic gameplay. Incidentally, remote content is also essential for viable advertising in many multiplayer situations.
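A global unlock schedule can be as simple as comparing timezone-aware UTC timestamps, so that content unlocks at the same instant for every player regardless of local time zone. In this hypothetical Python sketch the content ids and dates are invented, and `now` is assumed to come from a trusted server clock rather than the player's machine, since a local clock is trivial to change. As the Half-Life 2 example suggests, a schedule check alone does not protect assets that are already sitting unencrypted on disk.

```python
from datetime import datetime, timezone

# Hypothetical release schedule: content id -> global unlock instant (UTC).
RELEASE_SCHEDULE = {
    "chapter_1": datetime(2030, 1, 1, 18, 0, tzinfo=timezone.utc),
    "chapter_2": datetime(2030, 2, 1, 18, 0, tzinfo=timezone.utc),
}


def unlocked_content(schedule: dict[str, datetime], now: datetime) -> list[str]:
    """Return the ids whose unlock instant has passed at `now`, in unlock order.

    `now` must be timezone-aware (ideally taken from a trusted server clock)
    so every player, in any time zone, sees the same content at the same instant.
    """
    if now.tzinfo is None or now.utcoffset() is None:
        raise ValueError("now must be timezone-aware")
    ready = [(at, cid) for cid, at in schedule.items() if at <= now]
    return [cid for _, cid in sorted(ready)]


if __name__ == "__main__":
    server_time = datetime(2030, 1, 15, tzinfo=timezone.utc)
    print(unlocked_content(RELEASE_SCHEDULE, server_time))
```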

If a significant amount of the in-game interaction is text-based, this will also enable new game mechanisms, e.g. indirect cooperation. Players in separate single-player sessions or on separate servers can interact with a single outside entity (be it AI or a paid-for game master) and thereby take part in one shared exchange: a forum implemented inside a game. Over the last decade we have figured out how to maintain social networks and cooperation across the web; Interactive Surfaces allow us to access the same web-based solutions from inside a game. Imagine Myst with a wiki accessible to every player attempting to uncover its secrets. Imagine the trappings of an Alternate Reality Game implemented within the game's fiction. Imagine players in a procedural maze combining their in-game diaries into an in-game walkthrough.